I do look at other languages when designing and implementing a new shell and a language called NGS. As time goes by, there are more languages to look at and learn from. Hence, I think creating a language in recent years is easier than ever before. I would like to thank authors and contributors of all the languages!

NGS is heavily based on ideas that already existed before NGS. Current languages provide many of them and work on NGS includes sorting out which of these ideas resonate with my way of thinking and which don’t.

Of course, NGS does have its own original ideas (read: I’m not aware of any other language that implemented these ideas), and they are critical to NGS. Creating NGS is not just filtering others’ ideas 🙂

Some stolen ideas inspired by other languages

- Multi-methods & kind-of-object-orientation in NGS – mostly from CLOS

- External commands execution and redirection syntax – bash (while changing behaviour of the $ expansion, now known to be source of many bugs).

- Anonymous functions and closures – not sure where I got it from, I’d guess Lisp.

- Declarative primitives concept – heavily based on basic concept behind configuration management tools

- inspect() – Ruby’s inspect()

- code() – the .perl property from Perl. Not fully implemented in NGS. Should return the code that would evaluate to the given value. Probably comes from Lisp originally which usually prints the values in a way it can read them back.

- Ordered hash (When you iterate a Hash, the items are in the same order as they were inserted) – PHP, also implemented in Ruby and if I’m correct in V8.

- The in and not in operators – Python.

- Many higher order functions such as map and filter probably originated in Scheme or Lisp.

- Translate anything that is possible into function calls. The + operator, for example, is actually a function call – Python, originally probably from Scheme or Lisp.
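The last item can be illustrated in Python, one of the languages credited above. This is a minimal sketch of how Python treats + as a function call, not NGS code; the Money class is a hypothetical type made up for the illustration.

```python
import operator
from functools import reduce


class Money:
    """Hypothetical value type, used only for this illustration."""

    def __init__(self, cents: int) -> None:
        self.cents = cents

    def __add__(self, other: "Money") -> "Money":
        # Python calls this method when it evaluates `self + other`.
        return Money(self.cents + other.cents)


# The operator form and the explicit function-call form do the same thing.
via_operator = Money(150) + Money(250)
via_function = operator.add(Money(150), Money(250))

# Because + is available as an ordinary function, it can be passed
# to higher-order functions like any other function.
total = reduce(operator.add, [1, 2, 3, 4])
```

Once an operator is just a function, user-defined types can take part in it without any new syntax.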

Ideas trashed before implementation

While working on NGS I think about features I can add. I have to admit that many of these candidate features seem fine for a few seconds to a few minutes… till I realize that something similar is already implemented in language X and it does not resonate with my way of thinking (also known as “This does not look good”).

Square brackets syntax for creating a list

Suppose we have this common piece of code:

    list = []
    some_kind_of_loop {
        ...
        list.push(my_new_item)
        ...
    }

In this case it would be advantageous to express the concept of building a list with a special syntax so it would be obvious at first glance what’s happening. Since array literals already had the [1,2,3,...] syntax, the new building-a-list syntax would be [ something here ]. And that’s how we get list comprehensions in Python (and in other languages). The idea is solid, but when I see it implemented, it’s very easy for me to realize that I don’t like the syntax. List comprehension syntax is the preferred way to construct lists in Python and seems to be widely used. I’d like to make clear that I do like Python, and list comprehensions are one of the very few things I’m not fond of.

So what does NGS have for building lists? Let’s see:

    # Python
    [x*2 for x in range(10) if x > 5]

    # NGS straightforward translation
    collector
        for(x;10)
            if x > 5
                collect(x * 2)

Doesn’t look like much of an improvement. That’s because straightforward translations are not representative. The use of collector above is not a good example of its usage. More about collector later in this article.

Here are the NGS-y ways:

    # Python
    [x*2 for x in range(10) if x > 5]

    # NGS alternatives, pick your compactness vs clarity
    (0..10).filter(F(elt) elt>5).map(F(elt) elt*2)
    10.filter(F(elt) elt>5).map(F(elt) elt*2)
    10.filter(X>5).map(X*2)
    10?(X>5)/(X*2)

NGS does not have special syntax for building lists. NGS does have special syntax for building anything and it’s called collector.
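For readers more at home in Python than in NGS, the first NGS alternative above corresponds roughly to chaining Python’s built-in filter and map. This is only a Python analogue written for comparison, assuming (as the article’s own translation does) that 0..10 covers the same values as range(10).

```python
# The comprehension form shown in the article.
comprehension = [x * 2 for x in range(10) if x > 5]

# The function-call form, mirroring (0..10).filter(...).map(...):
# first keep the elements greater than 5, then double each of them.
chained = list(
    map(lambda elt: elt * 2,
        filter(lambda elt: elt > 5, range(10)))
)
```

Both forms build the same list. The difference is that the second uses only ordinary function calls, which is the direction NGS takes.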

Syntax for everything

Just say “no”. It is tempting at first to make syntax for all the concepts in the language or at least for most of them. When taken to its extreme, we have Perl and APL.

I don’t like Perl’s syntax, especially sigils, and especially that there is more than one sigil (unlike $ in bash). Note that Perl kind of admitted sigils were used inconsistently (or confusingly? not sure) so sigils syntax was partially changed in Perl 6. On the other hand, Perl 6 added sigil modifiers, called “twigils”. I counted 9 of them.

APL has many mathematical symbols in its syntax. Many of them are not on a keyboard. While the language is very terse and expressive, I don’t like the syntax and the fact that typing in a program in APL is not a straightforward task. [Update: Clarification: I’m not familiar with APL, as opposed to the other languages mentioned in this article. It was pointed out to me that I was quick to judge APL. It does have mathematical symbols but it actually doesn’t have much syntax. Most of the symbols are just built-in functions and operators. I’ve also found one symbol for “go to line” and one for “define function”/“end function”. Actually, APL syntax rules are an interesting “think different” approach, as they are an implementation of mathematical notation. Thanks for the link, geocar.]

I do agree with the reluctant attitude of Python’s developers towards adding new syntax, especially new characters. Perl appears to be on the other end of the spectrum.

I’d like to come back to the collector syntax and show that even when adding a syntax, it’s advantageous to add less of it and reuse existing language concepts if possible.

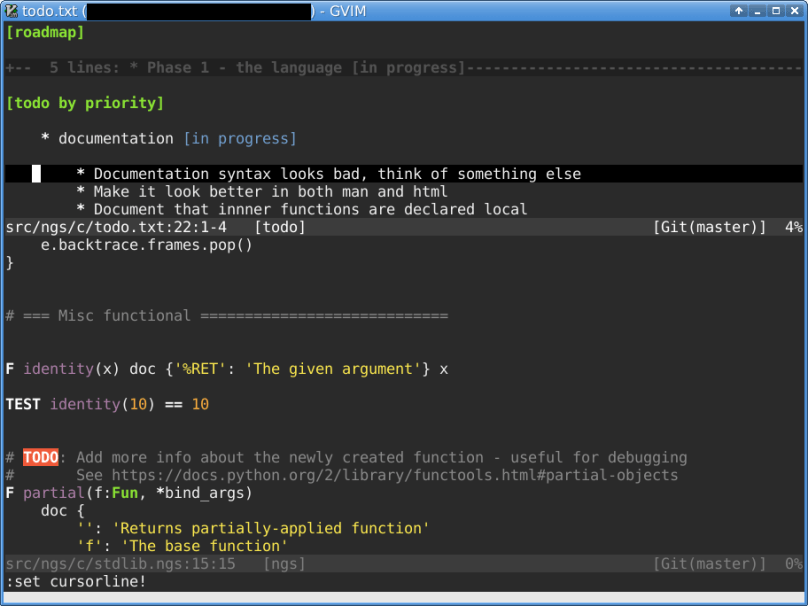

The initial thought was to base the collector syntax on Lisp’s (loop …) macro, specifically the macro’s collect keyword: (loop ... (collect ...) ...). I was about to add two new keywords: collector and collect. The code would look like this:

    mylist = collector
        for(x;10)
            if x > 5
                collect x * 2

I’ve started considering implementation strategies and came up with the idea that it would be simpler to have one keyword only, collector. The collector keyword wraps a single expression to the right (which can be a { code block }) in a function with one argument named collect. The code becomes this:

    mylist = collector
        for(x;10)
            if x > 5
                collect(x * 2)

When the collector expression is evaluated, the wrapped code runs with the provided collect function. Because collect is a function and not special syntax, all the facilities that work with functions can be used with it. Example: somelist.filter(...).each(collect).
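The idea of wrapping code in a function that receives collect as its argument can be sketched in Python. This is an illustration of the concept only, not how NGS implements it; the collector, build and each functions below are hypothetical Python stand-ins.

```python
from typing import Any, Callable, Iterable, List

Collect = Callable[[Any], None]


def collector(body: Callable[[Collect], None]) -> List[Any]:
    """Run `body` with a `collect` function and return what was collected."""
    result: List[Any] = []
    body(result.append)  # `collect` is just an ordinary function
    return result


def build(collect: Collect) -> None:
    # Plays the role of the code wrapped by the collector keyword.
    for x in range(10):
        if x > 5:
            collect(x * 2)


mylist = collector(build)


def each(items: Iterable[Any], fn: Callable[[Any], None]) -> None:
    """Hypothetical helper: call `fn` on every item."""
    for item in items:
        fn(item)


# Since `collect` is a plain function, it can be handed to other functions,
# similar in spirit to somelist.filter(...).each(collect).
evens = collector(
    lambda collect: each(filter(lambda n: n % 2 == 0, range(6)), collect)
)
```

In NGS the keyword does the wrapping for you, so no separate build function has to be written.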

There is more to collector! It has an optional initialization argument. Let’s see it in the following example:

    collector/0
        arr.each(F(elt) {
            if predicate(elt)
                collect(1)
        })

The code above counts the number of elements in arr which satisfy the predicate. That’s roughly how the standard library’s count() is implemented.

When collector is not followed by / (slash) and an initialization value, the default initialization argument is a newly created empty list. The default initial value was chosen to be an empty list as it appears that most uses of collector build lists.

Advantages of the implementation where collect is a function and not a keyword:

- Simpler implementation

- Collector behaviour customization – there is a way to provide your own collect function for your types which I will not describe here. Standard library defines such functions for array, hash and integer types.

- Functional facilities available for the collect function

- collect function can have more than one argument making it possible to collect key-value pairs if constructing a Hash: collector ... collect(k, v) ... (this is from filter() implementation for Hash in standard library).
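The initialization value and the per-type collect behaviour listed above can be sketched in Python as well. The mechanism below (functools.singledispatch on the type of the accumulated value) is an assumption chosen for the illustration; the article does not describe how NGS does it. The names collect_into, count and filter_hash are hypothetical.

```python
from functools import singledispatch


@singledispatch
def collect_into(acc, *args):
    """Hypothetical per-type collect behaviour, chosen by the type of `acc`."""
    raise TypeError(f"don't know how to collect into {type(acc).__name__}")


@collect_into.register(list)
def _(acc, item):
    acc.append(item)       # list: append the item
    return acc


@collect_into.register(dict)
def _(acc, key, value):
    acc[key] = value       # dict: two arguments, a key-value pair
    return acc


@collect_into.register(int)
def _(acc, n):
    return acc + n         # int: add to the running total


def collector(body, init=None):
    """Run `body(collect)`; the default initial value is a new empty list."""
    acc = [] if init is None else init

    def collect(*args):
        nonlocal acc
        acc = collect_into(acc, *args)

    body(collect)
    return acc


def count(arr, predicate):
    # Rough analogue of the collector/0 example.
    def body(collect):
        for elt in arr:
            if predicate(elt):
                collect(1)
    return collector(body, 0)


def filter_hash(h, predicate):
    # Rough analogue of collecting key-value pairs with collect(k, v).
    def body(collect):
        for k, v in h.items():
            if predicate(k, v):
                collect(k, v)
    return collector(body, {})
```

The point of the sketch is that one collector function covers lists, counters and hashes, with the differences living in ordinary functions rather than in syntax.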

I consider collector to be a good example of “less syntax is better”.

Easier, not easy

I do enjoy the situation that I have many other languages to look at for ideas, good or bad.

NGS needs original features for its domain (the systems administration tasks niche). These domain-specific features make NGS stand out. Other languages don’t have them because they are either not domain-specific, or have a wider domain, or are too old to have features for today’s systems administration domain. Every original feature has a risk attached to it. If you haven’t seen it work before, there is practically no way to predict how good or bad a feature will turn out. More about original features in NGS in another post.

While creating a language is now easier than before, it’s still not an easy task 🙂 … but you can help. Fork, code, make a pull request.

Have a nice weekend!

Update: discussion on reddit