Quoting Steve Bourne from An in-depth interview with Steve Bourne, creator of the Bourne shell, or sh:

I think you are going to see, as new environments are developed with new capabilities, scripting capabilities developed around them to make it easy to make them work.

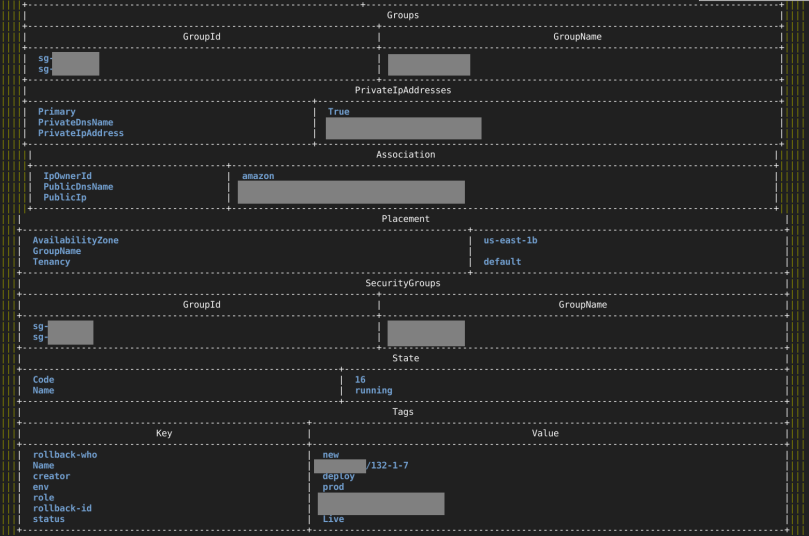

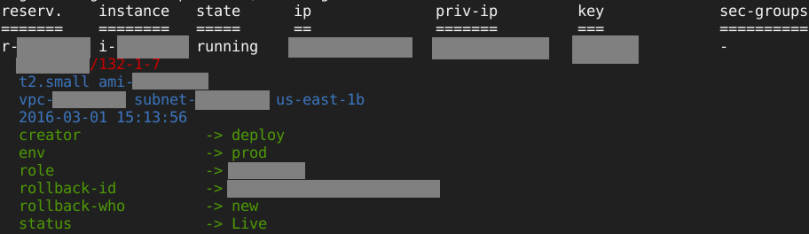

Cloud happened since then. Unfortunately, I don’t see any shell that could be an adequate response to that. Such a shell should at the very least have data structures. No, not like bash; I mean real, nested data structures that can represent a JSON response from an API and that have a sane syntax.

In many cases bash is the best tool for the job. Still, I do think that current shells are not a good fit for the problems I’m solving today. It’s as if time has frozen for shells while everything else advanced for decades.

As a systems engineer, I feel that no adequate shell or programming language exists for me to use.

Bash

Bash was designed decades ago. Nothing of what we expect from a modern programming language is there, and somehow I get the impression that it’s not expected from a shell. The years have changed many things around us, and bash is not one of them. It has changed very little.

It looks like it was designed to be an interactive shell, while the language was a bit of an afterthought. In practice it’s used not just as an interactive shell but as a programming language too.

What’s wrong with bash?

Sometimes I’m told that there is nothing wrong with Bash and another shell is not needed.

Even if we assume that nothing is wrong with Bash, there is nothing wrong with assembler and C either. Yet we have Ruby, Python, Java, Tcl, Perl, etc. Productivity and other concerns might have something to do with that, I guess.

… except that there are so many things wrong with bash: syntax, error handling, completion, prompt, inadequate language, pitfalls, lack of data structures, and so on.

While jq is trying to compensate for the lack of data structures, can you imagine any “normal” programming language that would outsource the handling of data? It’s insane.

Silently executing the rest of your code after an error by default. I don’t think this requires any further comments.
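To make the point about error handling concrete, here is a minimal sketch in POSIX shell. The function names `run_default` and `run_errexit` are hypothetical, chosen only for this illustration. It assumes an `sh` binary is on the `PATH`; bash behaves the same way for this case.

```shell
#!/bin/sh
# Default behavior: the failing command (false) is ignored
# and the next command still runs.
run_default() {
    sh -c 'false; echo "still running"'
}

# Opt-in behavior: with "set -e" (errexit) the shell exits
# at the first failing simple command, so echo never runs.
run_errexit() {
    sh -c 'set -e; false; echo "still running"'
}

run_default                                   # prints "still running"
run_errexit || echo "stopped with status $?"  # prints "stopped with status 1"
```

Stopping on errors has to be requested explicitly with `set -e`, and even then it comes with its own well-known exceptions, for example inside conditions and `||` lists.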

Do you really think that bash is the global maximum and we can’t do better decades later?

Over the years there were several attempts to make a better alternative to bash.

| Project | Focus on shell and shell UX | Powerful programming language |
| --- | --- | --- |
| Bash | No | No |
| Csh | No | No |
| Fish shell | Yes | No |
| Plumbum | No | Yes, Python |
| RC shell | No | No |
| sh | No | Yes, Python |
| Tclsh | No | Yes, Tcl |
| Zsh | Yes | No |

You can take a look at a more comprehensive comparison on Wikipedia.

Flaw in the alternatives

A shell or a programming language? All the alternatives I’ve seen to this day focus either on being a good interactive shell with a good UX or on using a powerful language. As you can see, there is no “yes, yes” row in the table above, and I’m not aware of any such project. Even if you find one, I bet it will have one of the problems I mention below.

Focusing on the language

The projects above that focus on the language choose existing languages to build on. This is understandable but wrong. A shell language was, and should be, a domain-specific language. If it’s not, the common tasks will be either too verbose or unnecessarily complex to express.

Some projects (not from the list above) choose a bash-compatible domain-specific language. I cannot categorize these projects as “focused on the language” because I don’t think one can build a good language on top of bash-compatible syntax. In addition, these projects did not do anything significant to make their language powerful.

Focusing on the interactive experience

All the projects I have seen that focus on the shell and UX neglect the language, using something inadequate instead of a real, full language.

What’s not done

I haven’t seen any domain-specific language developed to replace what we have now. I mean a language designed from the ground up to be used as a shell language, not just a domain-specific language that happened to be an easy-to-implement layer on top of an existing language.

Real solution

Do both: a good interactive experience and a good domain-specific language (not bash-compatible).

List of features I think should be included in a good shell: https://github.com/ilyash/ngs/blob/master/readme.md

Currently I’m using bash for what it’s good for and Python for the rest. A good shell would eliminate the need to use two separate tools.

The benefits of using a good shell over using one of the current shells plus a scripting language are:

Development process

With a good shell, you could start from a few commands and gradually grow your script. Today, when you start with a few commands, you either rewrite everything later in some scripting language or end up with a big bash/zsh/… script that uses an underpowered language and usually looks pretty bad.

Libraries

The same libraries are available for both interactive and scripting tasks.

Error handling and interoperability

Having one language for your tasks greatly simplifies both the integration between pieces of your code and error handling.

Help needed

Please help develop a better shell. I don’t mean an easy-to-implement one but a good one: a shell that would make people productive and be a joy to use. Contribute some code or tell your developer friends about this project.

https://github.com/ilyash/ngs/

I’m using Linux. I’m not using Windows and hope I will never have to. I don’t really know what’s going on there with shells, and anyhow it is not very relevant to my world. I did take a brief look at PowerShell, and it appears to have some good ideas.