How do other languages treat exit codes?

Most languages I know do not care about the exit codes of the processes they run. Some languages do care … but not enough.

Update / Clarification / TL;DR

- Out of the box, only NGS can throw exceptions based on fine-grained inspection of the exit codes of the processes it runs. For example, by default exit code 1 of test does not throw an exception, while exit code 1 of cat does. This makes it possible to write correct scripts without explicit exit code checks, so the scripts are smaller and therefore easier to maintain.
- This behaviour is highly customizable.

- In NGS, it is OK to write if $(test -f myfile) ... else ..., which throws an exception if the exit code of test is 2 (a syntax error in the test expression or similar). In bash and other languages, by contrast, you must explicitly check for and handle exit code 2, because a simple if cannot cover the three possible exit codes of test (zero for yes, one for no, two for error). Yes, if /usr/bin/test ...; then ...; fi in bash is incorrect! By the way, have you ever seen a script that actually checks for all three possible exit codes of test? I haven’t.

- When the -e switch is used, bash can exit (somewhat like an uncaught exception) when a process it runs returns a non-zero exit code. This is neither fine-grained nor customizable.

- I do know that exit codes are accessible in other languages when they run a process. However, other languages do not act on exit codes, with the exception of bash with the -e switch. In NGS, exit codes are translated to exceptions in a fine-grained way.
- I am aware that $? in the examples below shows the exit code of the language’s own process, not of the process that the language runs. I’m contrasting this with the behaviour of bash (-e) and NGS (an uncaught exception makes NGS exit with a non-zero exit code).

Let’s run the test binary with incorrect arguments.

Perl

> perl -e '`test a b c`; print "OK\n"'; echo $?
test: ‘b’: binary operator expected
OK
0

Ruby

> ruby -e '`test a b c`; puts "OK"'; echo $?
test: ‘b’: binary operator expected
OK
0

Python

> python
>>> import subprocess
>>> subprocess.check_output(['test', 'a', 'b', 'c'])
...
subprocess.CalledProcessError ... returned non-zero exit status 2
>>> subprocess.check_output(['test', '-f', 'no-such-file'])
...
subprocess.CalledProcessError: ... returned non-zero exit status 1

bash

> bash -c '`/usr/bin/test a b c`; echo OK'; echo $?
/usr/bin/test: ‘b’: binary operator expected
OK
0
> bash -e -c '`/usr/bin/test a b c`; echo OK'; echo $?
/usr/bin/test: ‘b’: binary operator expected
2

I used /usr/bin/test in the bash examples to keep them comparable with the others, avoiding bash’s built-in test.

Perl and Ruby, for example, see no problem with a failing process.

Bash does not care by default, but it has the -e switch, which makes a non-zero exit code fatal: bash exits and returns the offending exit code.

Python can differentiate zero and non-zero exit codes.
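Python’s standard subprocess module draws exactly this zero/non-zero line. The sketch below is illustrative only: the helper name classify is hypothetical, and it assumes a test binary on PATH. It shows that with check=True both a “false” answer from test (exit code 1) and a syntax error (exit code 2) arrive as the same CalledProcessError; telling them apart means inspecting returncode by hand.

```python
import subprocess


def classify(argv: list[str]) -> str:
    """Hypothetical helper: report what check=True alone tells us about argv."""
    try:
        # check=True raises CalledProcessError for ANY non-zero exit code
        subprocess.run(argv, check=True, stderr=subprocess.DEVNULL)
        return "ok"
    except subprocess.CalledProcessError as e:
        # Exit codes 1 and 2 both end up here; only e.returncode differs
        return f"failed with exit status {e.returncode}"


if __name__ == "__main__":
    print(classify(["test", "-d", "."]))             # the directory exists
    print(classify(["test", "-f", "no-such-file"]))  # a "false" answer
    print(classify(["test", "a", "b", "c"]))         # a syntax error
```

The exception type is the same in both failing cases, so a script that only catches CalledProcessError cannot distinguish “no such file” from “the check itself is broken”.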

So the best we can do is distinguish between zero and non-zero exit codes? That’s just not good enough. test, for example, can return 0 for a “true” result, 1 for a “false” result and 2 for an exceptional situation. Let’s look at this bash code with an intentional syntax error in the test expression:

if /usr/bin/test --f myfile; then
    echo OK
else
    echo File does not exist
fi

The output is

/usr/bin/test: missing argument after ‘myfile’
File does not exist

Note that the -e switch wouldn’t help here: the command that follows if is allowed to fail (it would be impossible to do anything if -e affected if and while conditions).
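For comparison, here is a minimal Python sketch of what a correct script has to do when the language does not help: handle all three outcomes of test explicitly. The function name test_expr is hypothetical, and the sketch assumes test(1) is on PATH and follows the usual convention (0 for true, 1 for false, anything greater for an error; GNU test uses 2).

```python
import subprocess


def test_expr(*args: str) -> bool:
    """Hypothetical helper: evaluate a test(1) expression, honouring all three outcomes."""
    code = subprocess.run(["test", *args]).returncode
    if code == 0:
        return True   # the expression is true
    if code == 1:
        return False  # the expression is false
    # Any other code (2 for GNU test) means test itself failed, e.g. a syntax error
    raise RuntimeError(f"test {' '.join(args)!r} failed with exit code {code}")


if __name__ == "__main__":
    if test_expr("-f", "myfile"):
        print("OK")
    else:
        print("File does not exist")
```

Given the mistyped expression from the bash example, test reports a syntax error, so test_expr raises instead of silently taking the else branch. The price is the extra branching that, as noted above, scripts almost never bother to write.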

How does NGS treat exit codes?

> ngs -e '$(test a b c); echo("OK")'; echo $?
test: ‘b’: binary operator expected
... Exception of type ProcessFail ...
200

> ngs -e '$(nofail test a b c); echo("OK")'; echo $?
test: ‘b’: binary operator expected
OK
0

> ngs -e '$(test -f no-such-file); echo("OK")'; echo $?
OK
0

> ngs -e '$(test -d .); echo("OK")'; echo $?
OK
0

NGS’s treatment of process exit codes is easily configurable. The built-in behaviour knows about the false, test, fuser and ping commands. For unknown processes, a non-zero exit code raises an exception.

If you use a command that returns a non-zero exit code as part of its normal operation, you can use the nofail prefix as in the example above, customize how NGS treats the exit codes of that command, or, even better, make a pull request adding it to the stdlib.
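To make the idea concrete outside NGS, here is a rough Python emulation of the default behaviour described above. Everything in it is an assumption for illustration: ProcessFail, OK_EXIT_CODES, finished_ok and run are hypothetical stand-ins, not NGS’s actual API, and commands are matched by executable name rather than by full path as the NGS stdlib does.

```python
import os
import subprocess


class ProcessFail(Exception):
    """Hypothetical stand-in for NGS's ProcessFail exception."""


# Hypothetical table mirroring the NGS defaults: exit codes that are part of
# normal operation, keyed by executable name. Unknown commands: only 0 is OK.
OK_EXIT_CODES: dict[str, frozenset[int]] = {
    "false": frozenset({1}),
    "test": frozenset({0, 1}),
    "fuser": frozenset({0, 1}),
    "ping": frozenset({0, 1}),
}
DEFAULT_OK = frozenset({0})


def finished_ok(executable: str, exit_code: int) -> bool:
    """Decide whether exit_code is a normal outcome for this executable."""
    name = os.path.basename(executable)
    return exit_code in OK_EXIT_CODES.get(name, DEFAULT_OK)


def run(argv: list[str], nofail: bool = False) -> int:
    """Run argv and return its exit code; raise ProcessFail on an unexpected code.

    nofail=True plays the role of NGS's nofail prefix: never raise.
    """
    code = subprocess.run(argv).returncode
    if not nofail and not finished_ok(argv[0], code):
        raise ProcessFail(f"{argv} exited with unexpected code {code}")
    return code
```

With this sketch, run(["test", "-f", "no-such-file"]) simply returns 1, run(["cat", "no-such-file"]) raises ProcessFail, and run(["cat", "no-such-file"], nofail=True) returns cat’s non-zero exit code. Teaching it about another command is one more table entry.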

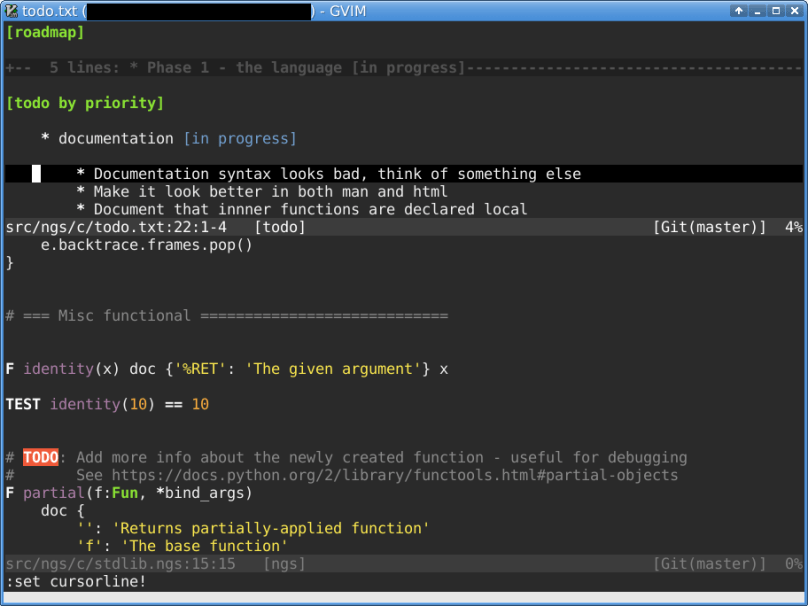

How easy is it to customize exit code checking for your own command? Here is the code from the stdlib that defines the current behaviour; decide for yourself. (I’m skipping nofail, as it’s not something the average user is expected to deal with.)

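# Default: only exit code 0 means the process finished OK
F finished_ok(p:Process) p.exit_code == 0

# /bin/false is expected to exit with code 1
F finished_ok(p:Process) {
    guard p.executable.path == '/bin/false'
    p.exit_code == 1
}

# For test, fuser and ping, exit codes 0 and 1 are both normal outcomes
F finished_ok(p:Process) {
    guard p.executable.path in ['/usr/bin/test', '/bin/fuser', '/bin/ping']
    p.exit_code in [0, 1]
}

Let’s get back to the bash if test ... example and rewrite it in NGS: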

if $(test --f myfile)
    echo("OK")
else
    echo("File does not exist")

… and run it …

... Exception of type ProcessFail ...

For the purposes of if, a zero exit code is true and any non-zero exit code is false (again, customizable). Combined with the exit code checks above, this lets the NGS if ... test ... example work correctly: it behaves somewhat like bash, but throws an exception when needed.

NGS’s behaviour makes much more sense to me. I hope it makes sense to you too.

Update: Reddit discussion.

Have a nice weekend!